Every GTM vendor in B2B SaaS now uses the word "agentic." It shows up in pitch decks, product pages, and analyst briefings. What it actually means varies enormously.

Some platforms have built genuine autonomous execution. Others have added a language model on top of existing workflow automation and updated their positioning. For revenue leaders evaluating these platforms, that gap is not academic. A team that picks a workflow tool expecting agentic behavior will set pipeline targets against capabilities the platform cannot deliver. Budgets go toward automation that runs tasks but cannot adapt to buyer behavior. That cost adds up across quarters.

The problem is not a shortage of vendors. It is the absence of a structured evaluation method built for this category. Traditional vendor assessments rely on feature checklists and RFP scoring. Those tools were built for products where the question is "does it do X?" Agentic platforms need a different question: "How well does it think, decide, and adapt?"

This scorecard answers that question across six criteria. Each one defines what genuine agentic GTM capability looks like, and how Tapistro's architecture delivers against it.

What Is an Agentic GTM Platform?

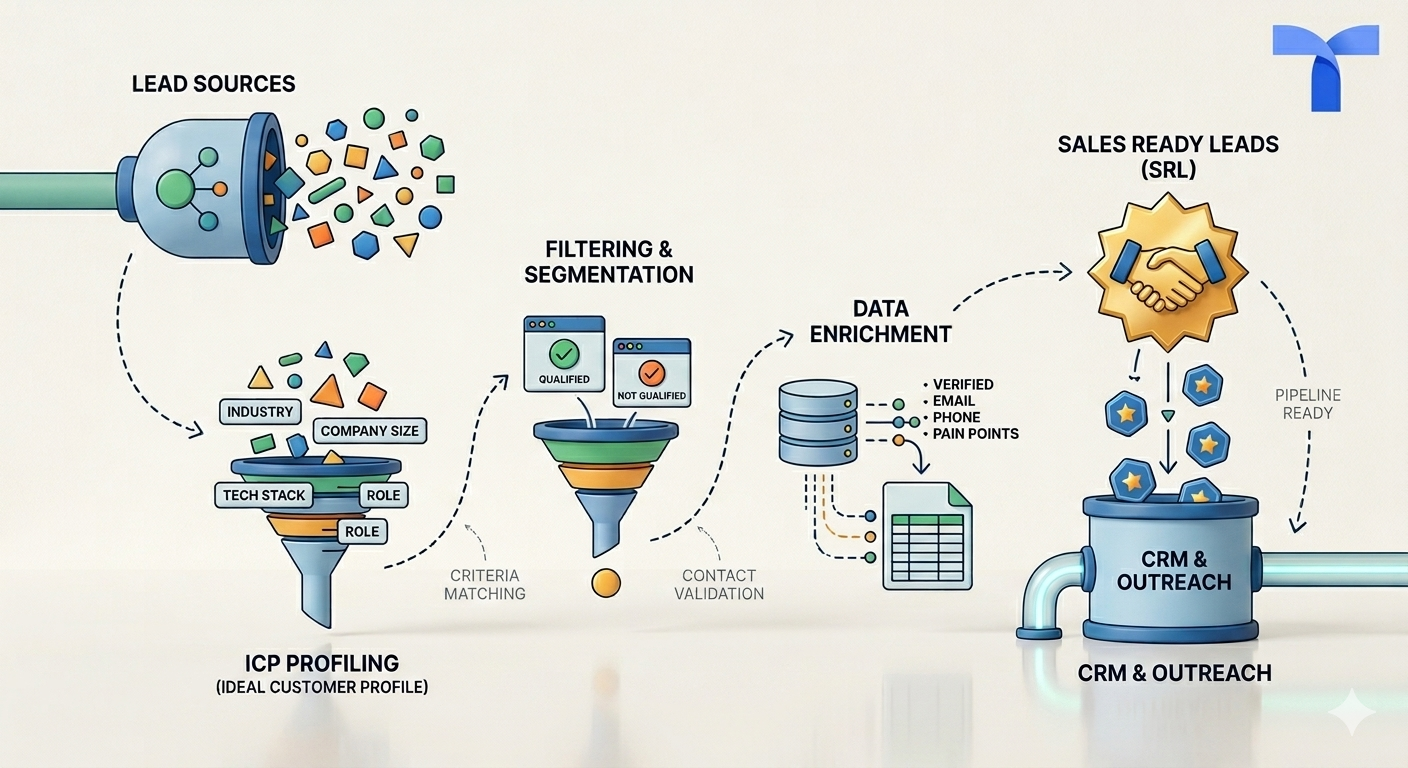

An agentic GTM platform is an AI-driven system that does more than execute predefined tasks. It makes decisions, responds to live buyer signals, and coordinates action across channels without requiring human input at every step. Unlike traditional automation tools, a genuine AI GTM orchestration platform learns from outcomes and continuously improves its own performance.

The core distinction: workflow automation follows rules. An agentic platform reasons about context, picks the right action, and adjusts when conditions change. If a prospect visits your pricing page twice in one week, automation sends the next scheduled email. An agentic system compresses the cadence, shifts to a higher-intent channel, and alerts the right rep with full context attached.

Tapistro is built as a GTM orchestration platform with native intent signal orchestration, real-time account enrichment, and AI-powered sequencing across the full GTM motion.

Why Traditional Vendor Evaluation Fails Here

Standard vendor evaluation follows a familiar pattern: compile a feature list, issue an RFP, score responses, run demos, compare pricing. This works well for CRMs, marketing automation platforms, and analytics tools. It breaks down for agentic platforms.

Feature lists cannot capture how autonomous a platform actually is. Two vendors might both claim "AI-powered lead scoring." One updates its model monthly. The other recalibrates continuously based on every closed-won and closed-lost pattern across your active pipeline. The feature list entry is identical. The impact on your team is not.

Demo environments also hide real problems. Vendor demos run on clean datasets with predictable signal patterns. What matters is how the system performs when signals conflict, data quality degrades, or buyer behavior deviates from expected patterns. Demos almost never test for these conditions.

Most evaluation criteria were also designed for tool-layer products. Questions like "does it integrate with Salesforce?" or "can it send multi-channel sequences?" only test whether a platform executes predefined tasks. They do not test whether the platform decides which tasks to execute, when, and why. That is the distinction that separates agentic GTM from automation, and it is what this scorecard is built to surface.

Six Questions to Ask Any Agentic GTM Tool

Use these criteria in live evaluation sessions where the vendor demonstrates on real or realistic data. The diagnostic questions below are designed to reveal capability depth, not just feature availability.

1. Does it act before the buying window closes?

Signal ingestion breadth and speed

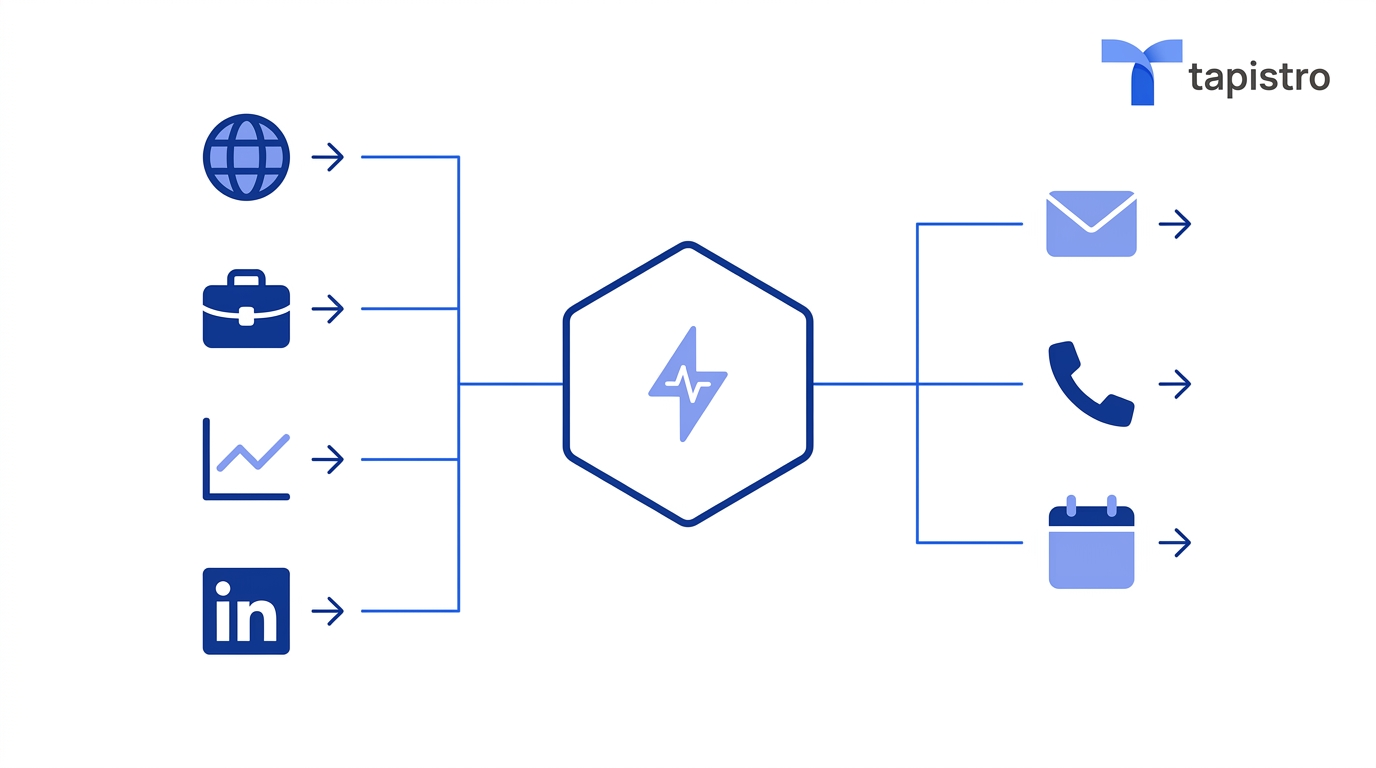

An agentic platform is only as good as the signals it can catch and how fast it acts on them. The evaluation here tests whether the platform ingests data from CRM systems, marketing engagement, website behavior, product usage, and third-party intent sources, and whether that ingestion happens in real time or through overnight batch processing.

A system that processes signals on a 24-hour cycle cannot respond to a prospect who visited your pricing page an hour ago. Real intent signal orchestration means the platform catches a signal and fires a downstream action in the same session window.

What this looks like in practice: Your target account's VP of Sales just spent 12 minutes on your ROI calculator. Nobody on your team knows. Tomorrow morning, the batch job runs, the CRM updates, an SDR sees it at 9am, and by the time they reach out the prospect has already had a call with a competitor. Signal ingestion speed is not a technical detail. It is a pipeline detail.

Diagnostic question: "Show me a signal that arrived in the last 60 minutes and the action it triggered."
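One way to make this diagnostic measurable is to ask the vendor for an export of recent signals alongside the actions they triggered, then compute the delay yourself. The sketch below assumes a hypothetical export with one row per signal; the `SignalRecord` fields, account names, and timestamps are illustrative and do not reflect any vendor's actual schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class SignalRecord:
    """One row from a hypothetical export of signals and triggered actions."""
    account: str
    signal_type: str
    signal_at: datetime
    action_at: Optional[datetime]  # None if no action was ever triggered


def time_to_first_action(record: SignalRecord) -> Optional[timedelta]:
    """Delay between signal arrival and the first action, or None if no action."""
    if record.action_at is None:
        return None
    return record.action_at - record.signal_at


def acted_within(records: list[SignalRecord], window: timedelta) -> float:
    """Share of signals that triggered an action inside the given window."""
    if not records:
        return 0.0
    hits = 0
    for r in records:
        delay = time_to_first_action(r)
        if delay is not None and delay <= window:
            hits += 1
    return hits / len(records)


# Illustrative data: one fast response, one next-morning batch response, one miss.
records = [
    SignalRecord("acme", "pricing_page_visit",
                 datetime(2024, 5, 1, 14, 0), datetime(2024, 5, 1, 14, 4)),
    SignalRecord("globex", "roi_calculator",
                 datetime(2024, 5, 1, 15, 0), datetime(2024, 5, 2, 9, 0)),
    SignalRecord("initech", "g2_research",
                 datetime(2024, 5, 1, 16, 0), None),
]

share = acted_within(records, timedelta(hours=1))
print(f"{share:.0%} of signals triggered an action within one hour")
```

A platform running on overnight batches will show most of its delays clustered around the next morning, which this kind of check makes visible quickly.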

How Tapistro delivers: Tapistro captures signals from G2, social, website visits, and third-party intent providers, 100+ sources in total, as they happen. These signals do not queue overnight. They flow into the platform in real time and immediately feed the decisioning layer. When a target account visits your pricing page or spikes in research activity, Tapistro registers the signal and can trigger a downstream action within the same session window.

2. Can you see why it's doing what it's doing?

Decisioning transparency

If the platform prioritizes Account A over Account B, your team needs to know why. If a sequence changes mid-execution, the logic should be auditable. Black-box decisioning might look fine in a demo. In production, it erodes trust and makes it impossible to know what to improve.

What this looks like in practice: An AI tool scores an account at 87 and your SDR reaches out, but the deal goes nowhere. Three months later, a similar account scores 62 and nobody touches it, even though the company just posted two VP of Sales jobs and their CEO just mentioned "consolidating our tech stack" on LinkedIn. If you cannot see what the model is weighing, you cannot fix it.

Diagnostic question: "Walk me through why this specific account was prioritized over that one."

How Tapistro delivers: Tapistro's Unified ICP surfaces the specific signals, firmographic attributes, and behavioral patterns that drive each prioritization decision. RevOps teams can see exactly which combination of intent signals, engagement velocity, and ICP fit criteria moved one account above another. When a scoring model needs adjustment, the inputs are visible, not buried inside a proprietary algorithm.

3. Does it coordinate across channels, or just send more messages?

Cross-channel execution

Genuine agentic GTM means coordinated action across email, LinkedIn, CRM, advertising, and content delivery from a single decisioning layer. The test is whether these channels operate as one system or whether the platform hands off to separate tools for each one. Handoffs create latency, data loss, and coordination failures.

What this looks like in practice: A prospect clicks your email, visits the case studies page, and then gets a LinkedIn connection request that opens with "I noticed you've been exploring solutions like ours." If the person sending that LinkedIn message had no idea about the email engagement, it is a lucky coincidence, not a system. And it usually does not feel lucky to the prospect.

Diagnostic question: "Show me one account's Journey across three channels in the last week."

How Tapistro delivers: Tapistro's Journey Canvas manages cross-channel signal orchestration from a single unified layer. Every touchpoint draws from the same account intelligence. When a prospect engages with an email, the LinkedIn follow-up reflects that engagement. When they visit the pricing page, the CRM updates and the next outreach adjusts. The account narrative stays coherent across every channel because every channel reads from and writes to the same orchestration layer.

4. Does it know when something changes, and act on it?

Context-aware Journey routing

Most outreach tools run on a fixed schedule. A prospect replies to your email, and the next message still goes out two days later, completely ignoring the reply. They download your pricing guide, and nobody knows. The sequence keeps running because the tool has no awareness of context outside its own queue.

A genuine agentic GTM platform treats the account as a persistent object. Everything that happens to that account, across every channel, is context. And that context should determine what happens next.

This is what separates real warm outreach automation from scheduled messaging. The platform does not just run steps. It reads the situation and routes accordingly.

What this looks like in practice: A prospect replies to an outreach email saying "send me more info on pricing." In a standard tool, that reply sits in the rep's inbox while the next automated email goes out two days later asking if they have ten minutes to chat. The prospect sees two disconnected messages and assumes nobody read their reply. In Tapistro, a reply is a signal. The platform captures that context, exits the prospect from the current Journey, and enrolls them in a separate Journey built specifically for warm replies, with messaging and steps designed for a lead that has already engaged. If you also have a Journey running LinkedIn outreach for that segment, the account gets enrolled there too, carrying the full context of the email exchange. Nothing is siloed. Nothing repeats.

Diagnostic question: "If a prospect replies to an email mid-sequence, what happens to the rest of their outreach across all channels?"

How Tapistro delivers: In Tapistro, Journeys are purpose-built flows for specific contexts: a cold outreach Journey, a warm reply Journey, a LinkedIn engagement Journey, a high-intent inbound Journey. When a lead takes an action, such as replying to an email, booking a meeting, visiting a key page, or engaging on LinkedIn, Tapistro captures that as context and routes the account into the right Journey for where they actually are. The account carries its full history across every Journey it moves through. That means your personalized outreach is always built on what the buyer actually did, not on what a sequence calendar assumed they would do.
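The routing idea can be pictured as a small state change on a persistent account record. The following is a minimal sketch under stated assumptions, not Tapistro's API: the `ROUTES` table, the `Account` class, and the event and Journey names are all hypothetical, chosen to mirror the examples in this section.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from a buyer action to the Journey that handles it.
ROUTES = {
    "email_reply": "warm_reply",
    "pricing_page_visit": "high_intent_inbound",
    "linkedin_engagement": "linkedin_engagement",
}


@dataclass
class Account:
    name: str
    journey: str = "cold_outreach"
    history: list = field(default_factory=list)  # context carried across Journeys


def route(account: Account, event: str) -> Account:
    """Record the event, then move the account if it maps to a different Journey."""
    account.history.append(event)
    target = ROUTES.get(event)
    if target is not None and target != account.journey:
        # Leaving the current Journey means its remaining scheduled steps never fire.
        account.journey = target
    return account


acct = Account("acme")
route(acct, "email_open")   # not a routing event: stays in cold_outreach
route(acct, "email_reply")  # a reply is a signal: moves to warm_reply
print(acct.journey, acct.history)
```

The point of the sketch is the ordering: the event is written to the account's history before any routing decision, so whichever Journey picks the account up next sees everything that came before.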

5. Can you control where it acts on its own?

Human-in-the-loop architecture

Full autonomy without oversight is not the goal. The right agentic platform gives you configurable boundaries. RevOps leaders should be able to define exactly where the system acts independently and where it waits for human approval, by segment, deal size, action type, or channel. The system runs freely inside those boundaries and escalates when it reaches them.

What this looks like in practice: You are comfortable with the AI running outreach autonomously for SMB accounts. For enterprise deals above $50K, you want a rep to approve the message before it sends. For any account that was previously touched by a named AE, you want a manual review before re-engagement. If your platform cannot make those distinctions, you either over-automate into deals that should have been handled by humans, or you under-automate because you do not trust the system anywhere near your important accounts.
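The three rules in this example can be written down as a small policy function, which is a useful exercise before a demo because it forces the team to agree on precedence. This is an illustrative sketch only: the names `OutreachRequest`, `Decision`, and `check_guardrails` are hypothetical, and the fallback for uncovered cases (escalate to a rep) is an assumption rather than something the scenario specifies.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTONOMOUS = "send without review"
    REP_APPROVAL = "rep approves message first"
    MANUAL_REVIEW = "manual review before re-engagement"


@dataclass
class OutreachRequest:
    segment: str               # e.g. "smb" or "enterprise"
    deal_size_usd: float
    touched_by_named_ae: bool


def check_guardrails(req: OutreachRequest,
                     approval_threshold_usd: float = 50_000) -> Decision:
    """Apply the strictest matching rule first."""
    if req.touched_by_named_ae:
        return Decision.MANUAL_REVIEW
    if req.segment == "enterprise" and req.deal_size_usd > approval_threshold_usd:
        return Decision.REP_APPROVAL
    if req.segment == "smb":
        return Decision.AUTONOMOUS
    # Assumption: anything not explicitly covered escalates instead of running unattended.
    return Decision.REP_APPROVAL


for req in (
    OutreachRequest("smb", 8_000, False),
    OutreachRequest("enterprise", 120_000, False),
    OutreachRequest("smb", 8_000, True),
):
    print(req.segment, req.deal_size_usd, "->", check_guardrails(req).value)
```

When evaluating a platform, the question is whether its controls can express rules of this shape, including which rule wins when two apply to the same account.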

Diagnostic question: "Show me the controls a RevOps leader would use to set boundaries on autonomous actions."

How Tapistro delivers: Tapistro provides configurable guardrails that let operations teams define the autonomy envelope. Teams set boundaries on which segments the Autopilots engage independently, which deal sizes require human approval before outreach, and which channels are available for autonomous GTM execution. The controls are accessible to RevOps without engineering support. That means the guardrails evolve as the team's confidence in the system grows.

6. Can you prove what actually drove pipeline?

Measurement and attribution

Activity metrics show what the platform did. Outcome metrics show what that activity produced. A real agentic GTM platform should be able to connect a closed deal back to the specific signal that started the sequence, the touchpoints that drove engagement, and the actions that converted the account into pipeline.

"We sent 10,000 emails" is an activity report. "Signal-triggered personalized outreach to high-intent accounts generated 47 qualified meetings at 3.2x higher conversion than static sequences" is an outcome measurement. One helps you report. The other helps you decide where to invest next quarter.

What this looks like in practice: Your team ran a signal-based campaign for 60 days. Response rates were strong. But now you need to renew the budget and your CRO wants to know which signals drove the pipeline, not just which emails got replies. If your platform cannot trace a deal back to the original intent signal, you are defending a number without evidence. That is a hard conversation to win.
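Tracing a deal back to its originating signal is, at its simplest, an ordered lookup over a touch log. The sketch below assumes a hypothetical log where every signal and action is stored against a deal ID; the `Touch` fields, deal IDs, and dates are made up for illustration and are not any platform's data model.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Touch:
    deal_id: str
    on: date
    kind: str    # "signal" or "action"
    detail: str


def trace(deal_id: str, log: list[Touch]) -> dict:
    """Return the originating signal and the ordered touches from it onward."""
    touches = sorted((t for t in log if t.deal_id == deal_id), key=lambda t: t.on)
    signals = [t for t in touches if t.kind == "signal"]
    origin = signals[0] if signals else None
    # Only touches on or after the first signal count as part of the path.
    path = [t.detail for t in touches if origin is not None and t.on >= origin.on]
    return {"origin": origin.detail if origin else None, "path": path}


log = [
    Touch("D-1", date(2024, 3, 9), "action", "linkedin message"),
    Touch("D-1", date(2024, 3, 4), "signal", "pricing page visit"),
    Touch("D-2", date(2024, 3, 6), "action", "email"),
    Touch("D-1", date(2024, 3, 5), "action", "email"),
]

result = trace("D-1", log)
print(result)
```

A deal such as `D-2` here, with actions but no recorded signal, returns an origin of `None`. In a real evaluation, the share of closed deals that come back that way is a direct measure of how much pipeline the platform cannot explain.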

Diagnostic question: "Show me how you attribute a closed deal back to the signals and actions that influenced it."

How Tapistro delivers: Tapistro connects the full chain from the initial intent signal captured by an Intent Connector, through the engagement sequence run by an AI Autopilot, to the pipeline outcome tracked in the CRM. RevOps teams can trace exactly which signal started a sequence, which touchpoints drove engagement, and how that engagement became revenue. The measurement layer reports on impact, not volume.

How Tapistro Ranks

Tapistro was built around these six criteria from the start, not retrofitted to them. On signal speed, Intent Connectors process data from 70+ sources in real time, with no overnight batch processing. On transparency, the Unified ICP surfaces exactly which signals drove each prioritization decision. On cross-channel execution, the Journey Canvas coordinates email, LinkedIn, and CRM actions from a single layer. On context-aware routing, accounts are treated as persistent objects: when a lead replies, engages, or triggers a signal, they are automatically moved into the right Journey for where they are, with full context carried across every step. On human oversight, configurable guardrails let RevOps define the autonomy envelope without engineering support. On attribution, the platform traces pipeline outcomes back to the originating signal and every touchpoint in between.

Customers report a 70% reduction in manual GTM tasks after deploying Tapistro. The AI agents run 24/7, continuously enriching the Unified Prospect Profile and updating ICP fit scores as new signals arrive. The result is that GTM execution happens at the speed of buyer behavior, not at the speed of your team's capacity.

This is why teams that apply this scorecard rigorously tend to reach the same conclusion. Tapistro performs well under structured evaluation because its architecture was designed to answer exactly the questions this evaluation asks.

How to Run a Proof of Concept That Tests These Criteria

The scorecard guides evaluation. A proof of concept tests whether the capabilities hold under real conditions.

Start with two high-impact use cases that match your actual GTM motion. Inbound prioritization and outbound sequencing are strong defaults because they test signal ingestion, decisioning, and execution at the same time. Define success metrics before the POC begins, not after. Focus on outcomes: conversion rates on prioritized accounts, response rates on adaptive versus static sequences, time-to-first-action on high-intent signals.

Bring RevOps in alongside Sales and Marketing from day one. RevOps evaluates guardrails, data quality, and configurability. Sales validates whether the platform's account prioritization matches their judgment. Marketing checks whether cross-channel signal orchestration actually improves engagement rates.

The most revealing test is whether the platform improves during the POC window. A static system performs the same on Day 30 as Day 1. Tapistro's AI Autopilots learn from engagement patterns, refine scoring models, and adjust execution based on outcomes. Performance should show measurable improvement over the evaluation window. If it is flat, the system is executing tasks. It is not learning. That difference defines the category.
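"Measurable improvement" is easier to judge if the comparison is fixed before the POC starts. A minimal sketch, assuming you log replies and sends per week: the function names and the weekly figures are illustrative, and relative change in response rate is only one of several reasonable measures.

```python
def response_rate(replies: int, sent: int) -> float:
    """Replies divided by messages sent; zero when nothing was sent."""
    return replies / sent if sent else 0.0


def improvement(weekly: list[tuple[int, int]]) -> float:
    """Relative change in response rate from the first week to the last.

    `weekly` holds one (replies, sent) pair per week of the POC.
    """
    first = response_rate(*weekly[0])
    last = response_rate(*weekly[-1])
    if first == 0:
        raise ValueError("first-week rate is zero; relative change is undefined")
    return (last - first) / first


# Illustrative four-week POC: (replies, sent) per week.
weekly = [(12, 400), (14, 400), (17, 400), (18, 400)]
change = improvement(weekly)
print(f"Response rate changed by {change:.0%} over the POC window")
```

With small weekly volumes, a change like this can fall within noise, so it is worth agreeing up front on the minimum send volume and the size of change that would count as evidence of learning.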

The Evaluation Method Determines the Outcome

Teams that evaluate agentic platforms using workflow-era criteria will select workflow-era tools with updated branding.

This scorecard reframes evaluation around the six criteria that define genuine agentic GTM execution: signal speed, decision transparency, cross-channel coordination, context-aware routing, human oversight, and outcome attribution. Tapistro maps directly to each one. That is not a coincidence. It is the result of building for the criteria that actually matter in production.

The market will keep adding "agentic" to product positioning regardless of underlying capability. This scorecard gives buyers the method to test for operational reality. Apply it carefully. The right platform will welcome the scrutiny.
